Is 0 a natural number?

When I was still teaching, several of my students asked me this: Is 0 a natural number?

Short Answer

It depends on the convention. In some references, the set of natural numbers includes 0. In other references, the set of natural numbers does not include 0.

Long Answer

When we define a mathematical concept, we come to a sort of agreement. For example, we might want to call the sequence 1, 4, 7, 10, … “cool numbers.” If all of us agree on this, we will have no problem. However, another group of uncool guys might give it another name. Or they might keep the name but change the sequence. This is what happened to the set of natural numbers.

As mathematics flourished, mathematicians and well-known mathematical organizations needed to agree on conventions such as the definitions of terms or the units of measure to use. This ensures that when they talk about a mathematical concept, they mean the same thing.

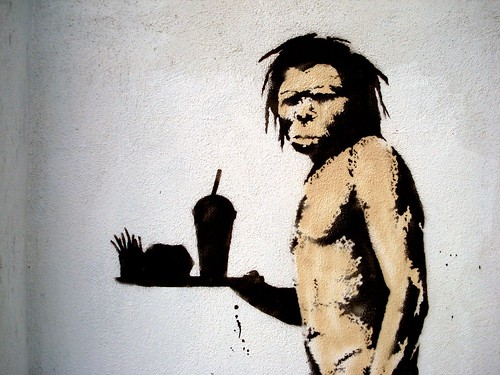

However, this was not always the case. Because many organizations were involved, some terms ended up with slightly different meanings. For instance, some mathematicians defined the natural numbers to be 1, 2, 3, 4, …, while others defined them to be 0, 1, 2, 3, 4, …. The first definition, however, is more popular because it is more natural and logical to count from 1. A caveman from 10 000 BC would probably ask us about zero, “Why would you tally if there is nothing to tally?” Set theory offers its own reasons for preferring one definition over the other, but that is beyond the scope of this blog, so I will not discuss it here.

So, who is right?

Neither of the two definitions above is right or wrong. Both are accepted by the mathematics and the mathematics education communities alike. As long as we are consistent, there is nothing to worry about.
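
One way to stay consistent is to use a symbol that says outright whether 0 is included. The notations below are a sketch of what some authors use; they are not taken from any particular reference discussed here, and your own textbook may use different symbols.

```latex
% Assumed notation for illustration; check your own reference.
% Z^+ : the positive integers (counting starts at 1)
% N_0 : the non-negative integers (counting starts at 0)
\[
  \mathbb{Z}^{+} = \{1, 2, 3, 4, \dots\},
  \qquad
  \mathbb{N}_{0} = \mathbb{Z}_{\ge 0} = \{0, 1, 2, 3, 4, \dots\}
\]
```

With symbols like these, a reader does not have to guess which convention the plain word "natural numbers" refers to.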

If you are a student taking number theory or a similar subject, just confirm the definition with your professor, or look it up in the glossary of your textbook.